Research Briefs

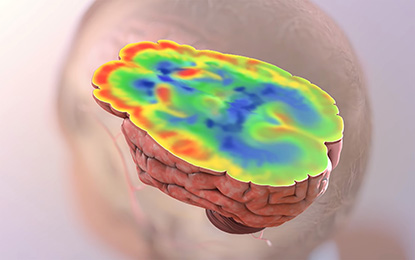

NIA: HIGHER BRAIN GLUCOSE LEVELS MAY MEAN MORE-SEVERE ALZHEIMER DISEASE

CREDIT: NIA

Scientists in the National Institute on Aging found potential connections between problems with how the brain processes glucose and Alzheimer disease pathology and symptoms. The red-colored areas show areas of preserved glucose utilization; the blue-colored areas indicate brain regions with low levels of glucose uptake. The latter is a distinctive and early feature of Alzheimer disease. NIA’s current findings provide an explanation for why glucose uptake may be lower in Alzheimer disease by showing that the biochemical reactions necessary for glycolysis (the breakdown of glucose) appear to be impaired.

For the first time, scientists have found a connection between abnormalities in how the brain breaks down glucose and the severity of the signature amyloid plaques and tangles in the brain, as well as the onset of eventual outward symptoms, of Alzheimer disease. The study, led by NIA researchers, involved looking at brain tissue samples at autopsy from participants in the Baltimore Longitudinal Study of Aging (BLSA), one of the world’s longest-running scientific studies of human aging. The BLSA tracks neurological, physical, and psychological data on participants over several decades.

Researchers measured glucose concentrations in different brain regions, some of them vulnerable to Alzheimer-disease pathology, such as the frontal and temporal cortex, and some that are resistant, such as the cerebellum. They analyzed three groups of BLSA participants: those with Alzheimer symptoms during life and with confirmed Alzheimer-disease pathology (beta-amyloid protein plaques and neurofibrillary tangles) in the brain at death; healthy control subjects; and individuals without symptoms during life but with significant amounts of Alzheimer pathology found in the brain post mortem.

The researchers found distinct abnormalities in glycolysis, the main process by which the brain breaks down glucose, with evidence linking the severity of the abnormalities to the severity of Alzheimer-disease pathology. Lower rates of glycolysis and higher brain glucose concentrations correlated with more-severe plaques and tangles found in the brains of people with the disease. More-severe reductions in brain glycolysis were also related to the expression of symptoms of Alzheimer disease during life, such as problems with memory.

Although similarities between diabetes and Alzheimer disease have long been suspected, they have been difficult to evaluate, because insulin is not needed for glucose to enter the brain or to get into neurons. The team tracked the brain’s usage of glucose by measuring ratios of the amino acids serine, glycine, and alanine to glucose, allowing them to assess rates of the key steps of glycolysis. They found that the activities of enzymes controlling these key glycolysis steps were lower in subjects with Alzheimer disease than in normal brain-tissue samples. Furthermore, lower enzyme activity was associated with more-severe Alzheimer pathology in the brain and the development of symptoms. Next, they used proteomics (the large-scale measurement of cellular proteins) to tally concentrations of glucose transporter protein 3 (GLUT3) in neurons. They found that GLUT3 concentrations were lower in brains with Alzheimer pathology than in normal brains and that these concentrations were also connected to the severity of tangles and plaques. Finally, the team checked blood glucose concentrations in study participants years before they died. They found that greater increases in blood glucose concentrations correlated with greater brain glucose concentrations at death.

The researchers cautioned that it is not yet completely clear whether abnormalities in brain glucose metabolism are definitively linked to the severity of Alzheimer-disease symptoms or the speed of disease progression. The next steps for the team include studying abnormalities in other metabolic pathways linked to glycolysis to determine how they may relate to Alzheimer-disease pathology in the brain. (NIA authors: Y. An, V.R. Varma, C.W. Chia, J.M. Egan, L. Ferrucci, and M. Thambisetty, Alzheimers Dement DOI:https://doi.org/10.1016/j.jalz.2017.09.011)

NIAID: INFECTIOUS PRION PROTEIN FOUND IN SKIN OF CJD PATIENTS

NIAID scientists and collaborators at Case Western Reserve University School of Medicine (Cleveland) have detected abnormal prion protein in the skin of nearly two dozen people who died from Creutzfeldt-Jakob disease (CJD). The scientists also exposed a dozen healthy mice to skin extracts from two of the CJD patients, and all developed prion disease. The study results raise questions about the possible transmissibility of prion diseases via medical procedures involving skin and about whether skin samples might be used to detect prion disease. The researchers stressed that the prion-seeding potential found in skin tissue is significantly less than what they have found in studies using brain tissue.

CJD is an incurable—and ultimately fatal—transmissible, neurodegenerative disorder in the family of prion diseases. Prion diseases originate when normally harmless prion protein molecules become abnormal and gather in clusters and filaments in the human body and brain. The reasons for this process are not fully understood. The accumulation of these clusters has been associated with tissue damage that leaves spongelike holes in the brain. Human prion diseases include fatal insomnia; kuru; Gerstmann-Straussler-Scheinker syndrome; and variant, familial, and sporadic CJD. Other prion diseases include scrapie in sheep; chronic wasting disease in deer, elk, and moose; and bovine spongiform encephalopathy, or mad-cow disease, in cattle.

Most people associate prion diseases with the brain, although scientists have found abnormal infectious prion protein in other organs, including the spleen, kidney, lungs, and liver. Sporadic CJD is known to be transmissible by invasive medical procedures involving the central nervous system and cornea, but transmission via skin had not been a common concern.

The study authors say the results should generate discussion about potential surgical-instrument contamination and the risk associated with procedures involving CJD patients. The NIAID group is doing further studies of when and where the pathological prion protein appears in skin and how to effectively inactivate its infectious forms. (NIAID authors: C.D. Orrú, B.R. Groveman, and B. Caughey, Sci Transl Med 9:eaam7785, 2017; DOI:10.1126/scitranslmed.aam7785)

NINDS, NCATS: HIBERNATING GROUND SQUIRRELS PROVIDE CLUES TO NEW STROKE TREATMENTS

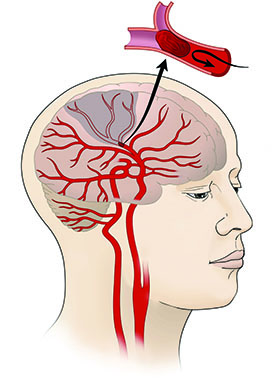

CREDIT: NINDS

A potential new treatment for stroke patients: A team of NINDS scientists identified a molecule that may reduce stroke-induced brain damage. Shown: Close-up of blood vessel with clot.

Like people suffering from ischemic strokes, ground squirrels experience dramatically reduced blood flow to their brains when they hibernate, depriving cells of life-sustaining oxygen and glucose. Yet the squirrels awaken with no ill effects because they rev up a neuroprotective pathway called SUMOylation during their extended naps. SUMOylation occurs when an enzyme attaches a molecular tag called a small ubiquitin-like modifier (SUMO) to a protein, altering its activity and location in the cell. Other enzymes called SUMO-specific proteases can then detach those tags, thereby decreasing SUMOylation.

A team of NINDS researchers and NIH-funded scientists has recently identified a potential drug that could grant the same resilience to the brains of ischemic-stroke patients by mimicking the cellular changes that protect the brains of the squirrels.

The researchers used a series of experiments to whittle a pool of more than 4,000 molecules from the NCATS small-molecule collections down to two nontoxic chemicals that boost SUMOylation in rat cells by blocking an enzyme, SUMO-specific protease 2, that decreases SUMOylation. The two compounds, ebselen and 6-thioguanine, also kept those rat cells alive in the absence of oxygen and glucose. A final experiment showed that ebselen could boost SUMOylation in the brains of healthy mice more than a placebo. The researchers now plan to test whether ebselen can protect the brains of animal models of stroke. (NINDS authors: J.D. Bernstock, D. Ye, Y.-J. Lee, and J.M. Hallenbeck; NCATS authors: A. Yasgar, J. Kouznetsova, A. Jadhav, W. Zheng, and A. Simeonov, FASEB J DOI:10.1096/fj.201700711R)

NIDA: SAFE FOR MOTHERS TO BREASTFEED WHILE BEING TREATED FOR OPIOID ADDICTION

Scientists at NIDA investigated the safety of breastfeeding when new mothers are taking buprenorphine, a medication used to treat opioid addiction. Although there are many known benefits of breastfeeding for women and infants, there was little evidence about the concentration and activity of buprenorphine in human breast milk. Using liquid chromatography–mass spectrometry, researchers measured the concentrations of buprenorphine and its metabolites in human breast milk and maternal plasma in 10 buprenorphine-managed mothers. In addition, plasma samples were taken from nine infants when they were 14 days old. The results indicated that buprenorphine concentrations are low in the breast milk and blood of the mothers and low or undetectable in the breastfed infants. The study’s findings support the recommendation that it is safe for women to breastfeed while maintaining buprenorphine treatments. Further study is needed, however, to understand the correlation between maternal dose, maternal plasma, and human-milk buprenorphine concentrations. (NIH authors: M.J. Swortwood, A.J. Barnes, and K.B. Scheidweiler, J Hum Lact 32:675–681, 2016; DOI:10.1177/0890334416663198)

NIEHS: PUTTING THE BRAKES ON PROTEIN PRODUCTION

New research from NIEHS scientists suggests that intragenic enhancers, which occur within genes rather than outside genes, act like brakes to slow transcription of the gene. The scientists showed that deletion of intragenic enhancers increases the expression of the host gene and can alter cell fate, with important implications. Enhancer mutations are associated with many types of cancer; the research is aimed at determining whether any cancers involve the activation of a cancer-causing gene due to the loss of the enhancer-mediated suppression function. Alternatively, if the protein in question prevents tumor growth, then having less of it may increase the risk of developing cancer. According to the researchers, the discovery of an unanticipated role for enhancers will break new ground in the field of transcription and alter the conventional view of enhancers as transcriptional activators. (NIEHS authors: S. Cinghu, P. Yang, J.P. Kosak, A.E. Conway, D. Kumar, A.J. Oldfield, K. Adelman, and R. Jothi, Mol Cell 68:104–117.e6, 2017).

The findings have been highlighted in Nature Reviews Genetics and Nature Reviews Molecular Cell Biology.

NICHD: EXPOSURE TO AIR POLLUTION IN EARLY PREGNANCY MAY BE LINKED TO MISCARRIAGE

Exposure to common air pollutants, such as ozone and fine particles, may increase the risk of early pregnancy loss, according to a study by NICHD scientists. Ozone is a highly reactive form of oxygen that is a primary constituent of urban smog. Researchers followed 501 couples attempting to conceive between 2005 and 2009 in Michigan and Texas. The investigators estimated the couples’ exposures to ozone based on pollution concentrations in their residential communities. Of the 343 couples who achieved pregnancy, 97 (28 percent) experienced an early pregnancy loss—all before 18 weeks. Couples with higher exposure to ozone were 12 percent more likely to experience an early pregnancy loss, whereas couples exposed to particulate matter (small particles and droplets in the air) were 13 percent more likely to experience a loss. The researchers do not know why exposure to air pollutants might cause pregnancy loss, but it could be related to increased inflammation of the placenta and oxidative stress, which can impair fetal development. The findings suggest that pregnant women may want to consider avoiding outdoor activity during air-quality alerts, but more research is needed to confirm this association. (NICHD authors: S. Ha, R. Sundaram, G.M. Buck Louis, C. Nobles, I. Seeni, and P. Mendola, Fertil Steril DOI:10.1016/j.fertnstert.2017.09.037)
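The proportions quoted in this brief can be checked with a few lines of arithmetic. The sketch below uses only the counts reported above; the variable and function names are illustrative and do not come from the study.

```python
# Counts reported in the brief (names are illustrative, not from the study).
couples_enrolled = 501   # couples followed while attempting to conceive
couples_pregnant = 343   # couples who achieved pregnancy
early_losses = 97        # pregnancies lost early (all before 18 weeks)


def percent(part, whole):
    """Return part/whole expressed as a percentage."""
    return 100.0 * part / whole


pregnancy_rate = percent(couples_pregnant, couples_enrolled)
loss_rate = percent(early_losses, couples_pregnant)

print(f"Couples achieving pregnancy: {pregnancy_rate:.1f}%")
print(f"Early losses among pregnancies: {loss_rate:.1f}%")
```

The second figure rounds to the 28 percent given in the text. The 12 and 13 percent increases in risk come from the study's statistical models and cannot be reproduced from these counts alone.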

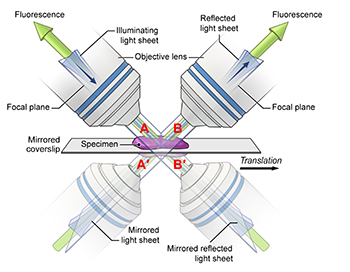

NIBIB: RESEARCHERS CREATE HIGHER-QUALITY PICTURES OF BIOSPECIMENS

CREDIT: YICONG WU, NIBIB

In this diagram, the mirrored coverslip allows for four simultaneous views.

Researchers from NIBIB and the University of Chicago improved the speed, resolution, and light efficiency of an optical microscope by switching from a conventional glass coverslip to a reflective, mirrored coverslip and applying new computer algorithms to process the resulting data. The team has spent the past few years developing optical microscopes that produce high-resolution images at very high speed. After the lab develops each new microscope, it releases the plans and software for free, so any researcher can replicate the advances made at NIH. In 2013, the team developed the dual-view inverted selective-plane illumination microscope equipped with two lenses so it obtains two views of the sample instead of just one. Just as using two eyes provides better depth and three-dimensional perception than using only one eye, the dual-view microscope enables three-dimensional (3-D) imaging with greater clarity and resolution than traditional single-view imaging.

In 2016, the team added a third lens and showed that this additional view can further improve light efficiency and resolution in 3-D imaging. But once three lenses were incorporated, it became increasingly difficult to add more. The lenses are bulky and need to be close to the samples to clearly image the detailed subcellular structure within a single cell or the neuronal development within a worm embryo. The space around the sample becomes more and more limited with each additional lens. The researchers’ solution was conceptually simple and relatively low-cost. Instead of trying to find ways to stuff in more lenses, they use mirrored coverslips. One complication is that both the conventional and the reflected views contain an unwanted background generated by the light source. To deal with this problem, the NIH researchers collaborated with University of Chicago researchers, who helped create computer-processing software to identify and remove the unwanted background and clarify the image. The researchers hope that in the future this technique may be adapted to other forms of microscopy.

(NIBIB authors: Y. Wu, A. Kumar, E. Ardiel, P. Chandris, R. Christensen, I.N. Rey-Suarez, M. Guo, H.D. Vishwasrao, J. Chen, and H. Shroff, Nat Commun 8:article number 1452, 2017, DOI:10.1038/s41467-017-01250-8)

NIAID: GENE-BASED ZIKA VACCINE IS SAFE AND IMMUNOGENIC IN HEALTHY ADULTS

CREDIT: NIH

Results from two phase 1 clinical trials show an experimental Zika vaccine developed by NIAID scientists is safe and induces an immune response in healthy adults. Investigators from NIAID’s Vaccine Research Center (VRC) and Laboratory of Viral Diseases developed the investigational vaccine, which includes a small, circular piece of DNA called a plasmid. Scientists inserted genes into the plasmid that encode two proteins found on the surface of the Zika virus. After the vaccine is injected into muscle, the body produces proteins that assemble into particles that mimic the Zika virus and trigger the body to mount an immune response.

NIAID developed two different plasmids for clinical testing: VRC5288 and VRC5283. The plasmids are nearly identical, but they differ in specific regions of the genes that might affect protein expression and therefore immunogenicity.

In August 2016, NIAID initiated phase 1 trials of the VRC5288 plasmid in 80 healthy volunteers age 18 to 35 years at three sites: the NIH Clinical Center; the Center for Vaccine Development at the University of Maryland School of Medicine’s Institute for Global Health (Baltimore); and Emory University (Atlanta). Participants received a four-milligram dose via a needle and syringe injection into the arm muscle. Participants received either two or three doses of the vaccine at varying time intervals, all at least four weeks apart.

In December 2016, NIAID initiated a separate trial testing the VRC5283 plasmid. This study took place at the NIH Clinical Center and enrolled 45 healthy volunteers age 18 to 50 years. All participants received either two or three four-milligram doses of the vaccine at varying time intervals. Trial investigators also tested different delivery regimens to see which was the most immunogenic. Some participants received the vaccine via a needle and syringe, while others received the vaccine from a needle-free injector that pushed fluid into the arm muscle. Additionally, some participants had the total vaccine dose divided, with one shot administered in each arm.

The vaccinations were safe and well tolerated in both trials, although some participants experienced mild to moderate reactions such as tenderness, swelling, and redness at the injection site. NIAID investigators concluded that VRC5283 showed the most promise and advanced it into an international efficacy trial in March 2017. The trial aims to enroll at least 2,490 healthy participants age 15 to 35 years in areas of confirmed or potential active mosquito-transmitted Zika infection in the continental United States, Puerto Rico, and Central and South America. The researchers are further evaluating the investigational vaccine’s safety and ability to stimulate an immune response, and they will attempt to determine if it can prevent disease caused by Zika infection. (NIAID authors: M.R. Gaudinski, K.V. Houser, J.R. Mascola, T.C. Pierson, J.E. Ledgerwood, G.L. Chen, et al.; Lancet DOI:10.1016/S0140-6736(17)33105-7)

NIAID: CASES OF UNEXPLAINED ANAPHYLAXIS LINKED TO RED-MEAT ALLERGY

CREDIT: NIAID

An adult female lone-star tick (Amblyomma americanum) climbs on a plant. Bites from the juvenile form of this species, sometimes called seed ticks, are linked to the development of red-meat allergy.

Although it is rare, some people experience recurrent episodes of anaphylaxis (a life-threatening allergic reaction that causes symptoms such as the constriction of airways and a dangerous drop in blood pressure) for which the triggers are never identified. Recently, researchers at NIAID found that some patients’ seemingly inexplicable anaphylaxis was actually caused by an uncommon allergy to a molecule found naturally in red meat. The researchers note that the allergy, which is linked to a history of a specific type of tick bite, may be difficult for patients and health-care teams to identify.

Of the 70 study participants (46 females and 24 males; age range 15 to 70 years) evaluated for unexplained frequent anaphylaxis, six males (ages 41 to 70 years) tested positive for an allergy to galactose-alpha-1,3-galactose, or alpha-gal, a sugar molecule found in beef, pork, lamb, and other red meats. The six men all had immunoglobulin E antibodies—immune proteins associated with allergy—to alpha-gal in their blood. After implementing diets free of red meat, none of them experienced anaphylaxis in the 18 months to three years during which they were followed. While the prevalence of allergy to alpha-gal, or “alpha-gal syndrome,” is not known, researchers have observed that it occurs mostly in people living in the Southeast region of the United States and certain areas of New York, New Jersey, and New England. This distribution may occur because most people with an allergy to alpha-gal, including all six participants evaluated at NIH, have a history of bites from juvenile lone-star ticks. (NIH authors: M.C. Carter, K.N. Ruiz-Esteves, and D.D. Metcalfe, Allergy DOI:10.1111/all.13366)

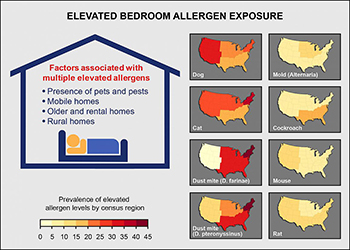

NIEHS, NIAID: ALLERGENS WIDESPREAD IN LARGEST STUDY OF U.S. HOMES

CREDIT: NIEHS

Factors contributing to elevated bedroom allergen concentrations include pets and pests, type of housing, and living in rural areas. Individual allergens vary by geographic area.

In a recent study, NIEHS researchers reported that over 90 percent of homes had three or more detectable allergens, and 73 percent of homes had at least one allergen at elevated concentration. Using data from the 2005–2006 National Health and Nutrition Examination Survey, the researchers studied levels of eight common allergens (cat, dog, cockroach, mouse, rat, mold, and two types of dust-mite allergens) in the bedrooms of nearly 7,000 U.S. homes. They found that pets and pests were associated with high concentrations of indoor allergens. Housing characteristics also mattered: elevated exposure to multiple allergens was more likely in mobile homes, older homes, rental homes, and homes in rural areas. For individual allergens, exposure varied greatly with age, sex, race, ethnicity, and socioeconomic status. Differences were also found among geographic locations and climatic conditions. For example, elevated dust-mite allergen concentrations were more common in the South and Northeast and in regions with a humid climate. Concentrations of cat and dust-mite allergens were also found to be higher in rural areas than in urban settings. (NIEHS authors: P.M. Salo, P.J. Gergen, and D.C. Zeldin, J Allergy Clin Immunol DOI:https://doi.org/10.1016/j.jaci.2017.08.033)

NIDA: BRAIN PATHWAY INVOLVED IN DRUG RELAPSE

CREDIT: MARCO VENNIRO, NIDA

A team of researchers at NIDA has identified what may be the crucial brain circuit involved in relapse to drug use when an effective behavioral treatment for drug addiction, known as contingency management, is discontinued. Contingency management uses non-drug rewards, such as cash stipends, prizes, or coupons for retail goods, to encourage people to remain drug-free. Most patients, however, relapse when they no longer receive the alternative reward. The researchers used an animal model in which rats voluntarily abstain from drug self-administration when given food rewards. In this model, the rats choose to abstain from methamphetamine or heroin when an alternative non-drug reward is available, but relapse to drug-seeking when the alternative reward is removed. Using chemogenetic manipulations, electron microscopy, and other techniques, the scientists identified the anterior insular cortex–to–central amygdala nerve path as critical to the relapse process. These findings provide insights into the brain mechanisms underlying relapse after successful contingency-management treatment and identify a potential novel target for relapse prevention using brain-stimulation methods. (NIH authors: M. Venniro, M. Zhang, L.R. Whitaker, S. Zhang, B.L. Warren, J.M. Bossert, M. Morales, and Y. Shaham, Neuron 96:414–427, 2017)

NIDA: BRAIN NETWORKS PREDICT DRUG RELAPSE WITH COCAINE

NIDA researchers identified a resting-state brain circuit whose functional connectivity predicts the likelihood of relapse to cocaine use. Previous neuroimaging studies focused on single imaging modalities to understand the neural basis of cocaine addiction. However, there were no assessments of the relation between neurological structural and functional differences. In the current investigation, researchers used resting-state functional connectivity studies to characterize addiction at a functional circuit or network level. They compared functional magnetic resonance imaging scans of 67 control subjects (non-cocaine users) and 64 non-treatment-seeking cocaine users. The data showed that differences in cortical thickness are associated with altered functional circuits. Cocaine-dependent subjects had weaker connectivity in eight resting-state networks than the nonusers did. The resting-state networks play a critical role in the brain’s functional organization and the ability to process various stimuli. The strength of these networks indicates the degree of coordination among the component structures. Additionally, the scientists found that connectivity levels in a specific brain network that links the temporal pole and medial prefrontal cortex were more than 70 percent accurate in predicting relapse in 45 cocaine-dependent individuals who had completed a residential rehabilitation program. This brain circuit contributes to social and emotional functions that are often altered by cocaine use. Ultimately, the study results may help tailor treatment programs and therapies for individuals with cocaine-use disorders. (NIH authors: X. Geng, Y. Hu, H. Gu, B.J. Salmeron, E.A. Stein, and Y. Yang; Brain 140:1513–1524, 2017; DOI:10.1093/brain/awx036)

NIDA: OPIOID-TREATMENT DRUGS HAVE SIMILAR OUTCOMES ONCE PATIENTS INITIATE TREATMENT

A study comparing the effectiveness of two pharmacologically distinct medications used to treat opioid-use disorder (a buprenorphine-naloxone combination and an extended-release naltrexone formulation) shows similar outcomes once medication treatment is initiated. Until now, the two regimens had not been compared head to head in the United States, so the comparative-effectiveness data needed to make informed choices were lacking. Among active opioid users, however, it was more difficult to initiate treatment with naltrexone. Study participants were dependent on nonprescribed opioids, 82 percent of them on heroin and 16 percent on pain medications. The research was conducted at eight sites within the NIDA Clinical Trials Network. A total of 575 opioid-dependent adults were randomized to the buprenorphine combination or the naltrexone formulation, and they were followed for up to 24 weeks of outpatient treatment. Study sites differed in their detoxification approaches and in their typical inpatient length of stay. Buprenorphine-naloxone was given daily as a sublingual film (under the tongue), whereas naltrexone was a monthly intramuscular injection. Adverse events, including overdoses, were tracked.

“Studies show that people with opioid dependence who follow detoxification with no medication are very likely to return to drug use, yet many treatment programs have been slow to accept medications that have proven to be safe and effective,” said NIDA Director Nora D. Volkow. “These findings should encourage clinicians to use medication protocols, and these important results come at a time when communities are struggling to link a growing number of patients with the most effective individualized treatment.” (NIH authors: D. Liu and G. Subramaniam, Lancet DOI:http://dx.doi.org/10.1016/S0140-6736(17)32812-X)

NICHD, NCI: OBESITY DURING PREGNANCY MAY LEAD DIRECTLY TO FETAL OVERGROWTH

Obesity during pregnancy, independent of its health consequences such as diabetes, may account for the higher risk of giving birth to an atypically large infant, according to researchers at NICHD and NCI. In the current study, researchers analyzed ultrasound scans taken throughout pregnancy of more than 2,800 pregnant women: 443 obese women with no accompanying health conditions such as diabetes and more than 2,300 non-obese women. Beginning in the 21st week of pregnancy, ultrasound scans revealed that for fetuses of obese women, the femur (thigh bone) and humerus (upper arm bone) were longer than those of the fetuses of non-obese women. The differences between fetuses of obese and non-obese women continued through the 38th week of pregnancy. For fetuses in the obese group, the average femur length was 0.8 millimeters longer (about 0.03 inches) than for those in the non-obese group, and humerus length was about 1.1 millimeters longer (about 0.04 inches). Average birth weight was about 100 grams (about 0.2 pounds) heavier in the obese group. Moreover, infants born to obese women were more likely to be classified as large for gestational age (birth weight above the 90th percentile) than infants born to non-obese women.
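The parenthetical unit conversions above can be verified with a short sketch. The conversion factors are the standard exact definitions of the inch and the avoirdupois pound; the function names are illustrative.

```python
# Exact conversion factors by international definition.
MM_PER_INCH = 25.4
GRAMS_PER_POUND = 453.59237


def mm_to_inches(mm):
    """Convert a length in millimeters to inches."""
    return mm / MM_PER_INCH


def grams_to_pounds(grams):
    """Convert a mass in grams to avoirdupois pounds."""
    return grams / GRAMS_PER_POUND


# Differences reported in the brief (obese group minus non-obese group).
print(f"Femur:        0.8 mm = {mm_to_inches(0.8):.2f} in")
print(f"Humerus:      1.1 mm = {mm_to_inches(1.1):.2f} in")
print(f"Birth weight: 100 g  = {grams_to_pounds(100):.1f} lb")
```

Rounded to the precision used in the text, the three values match the approximate figures quoted in the paragraph.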

The study could not determine exactly why the fetuses of obese women were larger and heavier than fetuses in the non-obese group. The researchers theorize that because obese women are more likely to have insulin resistance (difficulty using insulin to lower blood sugar), higher blood sugar concentrations could have promoted overgrowth in their fetuses. The authors pointed out that earlier studies indicated that the higher risk of overgrowth seen in newborns of obese women may predispose these infants to obesity and cardiovascular disease later in life. They called for additional studies to follow the children born to obese women to determine what health issues they may face. (NIH authors: C. Zhang, M.L. Hediger, P.S. Albert, J. Grewal, S. Kim, K.L. Grantz, and G.M. Buck Louis, JAMA Pediatr DOI:10.1001/jamapediatrics.2017.3785)

NIAID: CELLPHONE-BASED MICROSCOPE CAN TREAT RIVER BLINDNESS

River blindness, or onchocerciasis, is a disease caused by a parasitic worm found primarily in Africa. The worm (Onchocerca volvulus) is transmitted to humans as immature larvae through the bites of infected black flies. Symptoms of infection include intense itching and skin nodules. Left untreated, river blindness may lead to infections in the eye and thus may cause vision impairment that leads to blindness. Mass distribution of ivermectin is currently used to treat onchocerciasis. However, this treatment can be fatal when a person has high blood concentrations of another filarial worm, Loa loa. In a recent study, scientists at NIAID and other organizations described how a cell-phone-based videomicroscope can provide fast and effective testing for L. loa parasites in the blood, allowing these individuals to be protected from the adverse effects of ivermectin.

In the new study, 16,259 volunteers in 92 villages in Cameroon where both L. loa and O. volvulus are commonly found provided finger-prick blood samples. These samples were then tested for L. loa using the LoaScope, a small microscope that incorporates a cell phone. Developed by a team led by researchers from NIAID and the University of California, Berkeley, the LoaScope returns test results in less than three minutes. Volunteers who were not infected or who had low-level L. loa infections were given standard ivermectin treatment and observed closely for six days afterward. Volunteers with high numbers of L. loa parasites in their blood did not receive ivermectin. Using this strategy, 15,522 study volunteers were successfully treated with ivermectin without serious complications. Nearly 1,000 participants experienced mild adverse effects after ivermectin treatment. According to the study authors, the LoaScope could be a valuable approach in the fight against river blindness by effectively targeting populations for ivermectin treatment and protecting dually infected patients from complications of inadvertent ivermectin administration. (NIH authors: A.D. Klion and T.B. Nutman, NEJM DOI:10.1056/NEJMoa1705026)
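The test-then-treat strategy described above amounts to a simple decision rule applied to each volunteer. The following is a minimal sketch of that rule, not the LoaScope software itself. The brief does not state the parasite-density cutoff, so `HYPOTHETICAL_CUTOFF_MF_PER_ML` is a placeholder value chosen only for illustration, and the function and variable names are likewise assumptions.

```python
# Placeholder cutoff: the brief does not give the threshold used in the
# study, so this number is purely illustrative.
HYPOTHETICAL_CUTOFF_MF_PER_ML = 20_000


def triage(loa_density_mf_per_ml, cutoff=HYPOTHETICAL_CUTOFF_MF_PER_ML):
    """Return 'treat' for absent or low-level L. loa, 'withhold' otherwise."""
    if loa_density_mf_per_ml < 0:
        raise ValueError("parasite density cannot be negative")
    if loa_density_mf_per_ml > cutoff:
        return "withhold"  # high L. loa load: ivermectin is not given
    return "treat"         # uninfected or low-level infection


# Made-up example readings (not study data).
for reading in (0, 1_500, 45_000):
    print(f"{reading:>6} mf/mL -> {triage(reading)}")

# Counts reported in the brief.
volunteers_tested = 16_259
volunteers_treated = 15_522
share_treated = 100.0 * volunteers_treated / volunteers_tested
print(f"Treated without serious complications: {share_treated:.1f}% of those tested")
```

Keeping the cutoff as a parameter makes the assumption explicit: anyone adapting the sketch would substitute the threshold defined in the published protocol.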

This page was last updated on Friday, April 8, 2022