2018 Research Festival: Virtual Reality

NIH Researchers Showcase VR’s Potential for Biomedicine

Virtual reality (VR) systems have hit a sweet spot. Enhancements in resolution and interactive capacity have come together at a reasonable price point. Judging from the range of VR demos at the NIH Research Festival, the NIH community has plenty of ideas for tapping into the technology’s biomedical potential. The NIH Library and the Virtual and Augmented Reality Interest Group (VARIG) teamed up to provide a variety of VR demos ranging from viewing molecules to biomedical-training simulations.

CREDIT: VICTOR CID, NLM

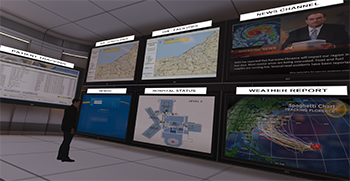

Festival attendees stepped into a VR emergency-operations center and took in the flood of information about Hurricane Florence.

“VR provides an immersive experience that keeps people from getting distracted by real life, and that’s been a game changer for training applications,” said Victor Cid, senior computer scientist for the National Library of Medicine’s Disaster Information Management Research Center. He developed a prototype VR-based training system to conduct hospital disaster-management exercises, which are critical for helping health-care facilities prepare for disasters. As Hurricane Florence loomed off the coast of the Carolinas, festival attendees found themselves at a VR emergency-operations center weighing options for a hospital in the path of the storm.

Other systems are exploring VR’s empathy-building potential. The National Eye Institute showcased a VR simulation of what vision is like for people with cataracts or age-related macular degeneration, two of the most common conditions leading to vision loss. Users experience how difficult each disorder makes shopping in a supermarket and seeing at night in an urban setting. A Google Cardboard version, which turns a smartphone into an inexpensive VR viewer, is in development. The simulator can educate caregivers and may even motivate people to take steps to keep their eyes healthy.

CREDIT: NEI

Shopping in a grocery store is complicated by the loss of central vision from age-related macular degeneration, as this view from the NEI eye-disease simulator shows.

The Zebrafish Brain Browser, designed in Harold Burgess’s lab in the Eunice Kennedy Shriver National Institute of Child Health and Human Development, uses the open-source platform Extensible 3D (X3D) to let users interact with imaging data from the fish’s brain. Those data include volumetric renderings of gene expression patterns in transgenic fish. Users can upload their own images for comparison with the transgenic lines. For more information, visit http://www.zbbrowser.com/. A Google Cardboard VR version is available by clicking “VR” on the website.

To find out more about VARIG, which holds monthly meetings, and to join its LISTSERV, go to https://oir.nih.gov/sigs/virtual-augmented-reality-scientific-interest-group-varig. New members are always welcome.

This page was last updated on Wednesday, April 6, 2022